GEO Analytics: Essential Metrics to Track for AI Search Visibility

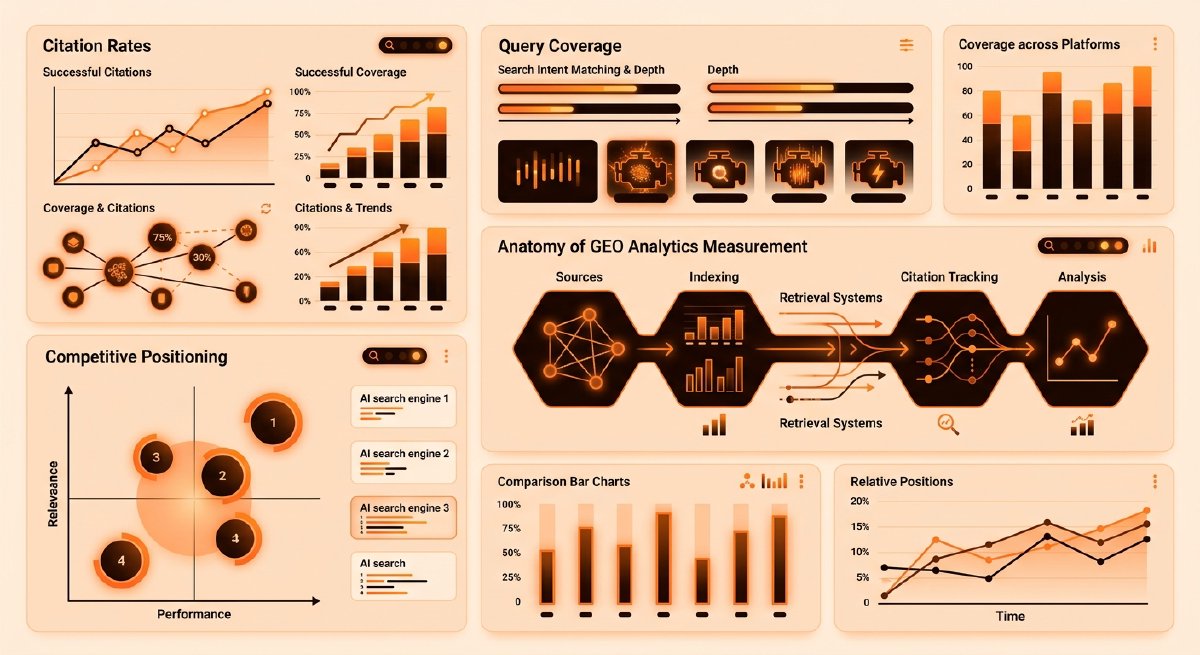

TL;DR:

- Citation rate - the percentage of AI engine responses citing a domain’s content - is the primary GEO metric; track it by query, domain, and competitor.

- Citation position - where content appears in the response (first, middle, last) - correlates with click-through and user trust.

- Query coverage - how many relevant queries cite any content - reveals gaps in a GEO strategy.

- Traffic quality - time-on-page, scroll depth, and conversion rate from AI citations - proves citation value beyond vanity counts.

- Competitive share - a domain’s citation rate vs. competitors’ - shows whether GEO efforts are gaining ground or losing share.

- Attribution window - the lag between content publication and first AI citation - helps forecast visibility timelines.

Why GEO metrics matter differently than SEO

SEO metrics are well-established: rankings, impressions, clicks, CTR. Generative Engine Optimization is younger, and the measurement landscape is still forming. But the stakes are identical - if AI engines are answering user queries without sending traffic to a site, content is invisible where it matters most.

The Princeton/Georgia Tech/IIT Delhi study on GEO (KDD 2024) reported that targeted optimization can boost a source’s visibility in generative engine responses by up to 40%. But that visibility is meaningless if teams can’t measure it. The metrics below are the ones that translate GEO effort into business outcomes.

1. Citation rate - the primary GEO metric

What it is: The percentage of AI engine responses (across ChatGPT, Claude, Gemini, Perplexity, Grok, and others) that cite at least one piece of a domain’s content.

Why it matters: A citation is the GEO equivalent of a ranking. It’s the moment an AI engine decides content is authoritative enough to surface to a user. Unlike SEO rankings, which are comparatively stable for a given query, citations are probabilistic - the same query may cite different sources on different days, and different engines cite different sources for the same query.

How to track it:

- By query: Monitor a representative set of 50-200 queries relevant to the business. For each query, run it through major AI engines weekly and log which sources are cited. Calculate: (responses citing the domain’s content / total responses collected) × 100.

- By domain: Aggregate citation rate across all monitored queries to get a baseline for the site.

- By competitor: Run the same queries and track competitor citation rates. A competitor cited at a 35% rate on shared queries is outperforming a domain cited at 20%.
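
The tracking steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the record layout, the domain names, and the function names are all hypothetical, and the rate is computed per response, following the definition of citation rate given above.

```python
from collections import defaultdict

# Hypothetical log: one record per (query, engine) run, with the domains cited.
responses = [
    {"query": "best crm for startups", "engine": "chatgpt", "cited": ["example.com", "rival.com"]},
    {"query": "best crm for startups", "engine": "claude", "cited": ["rival.com"]},
    {"query": "crm pricing comparison", "engine": "chatgpt", "cited": ["example.com"]},
    {"query": "crm pricing comparison", "engine": "perplexity", "cited": []},
]

def citation_rate(records, domain):
    """Percentage of responses that cite `domain` at least once."""
    if not records:
        return 0.0
    hits = sum(1 for r in records if domain in r["cited"])
    return 100.0 * hits / len(records)

def citation_rate_by_query(records, domain):
    """Citation rate for `domain`, broken out per monitored query."""
    grouped = defaultdict(list)
    for r in records:
        grouped[r["query"]].append(r)
    return {query: citation_rate(rs, domain) for query, rs in grouped.items()}

own_rate = citation_rate(responses, "example.com")
rival_rate = citation_rate(responses, "rival.com")
per_query = citation_rate_by_query(responses, "example.com")
```

Running the same function with a competitor's domain gives the by-competitor view from the same log, so no second data collection pass is needed.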

Benchmark: Early GEO research suggests citation rates of 15-25% on relevant queries are solid; 30%+ is strong. Rates below 10% suggest content gaps or visibility issues.

Tool note: Zeover’s GEO analytics engine crawls AI responses and extracts citations automatically, but manual spot-checking remains valuable for validation.

2. Citation position - where in the response matters

What it is: The ordinal position of content within an AI engine’s response. First mention, middle of the response, or relegated to a supporting link at the end.

Why it matters: Position correlates with user attention and trust. A source cited first in a Claude response gets more cognitive weight than one buried in the middle. Users are more likely to click a first-cited source, and AI engines themselves may weight first-cited sources as more authoritative.

How to track it:

- Log the position of citations in each response: position 1, 2, 3, etc.

- Calculate average position across all citations: lower is better.

- Track position distribution: what percentage of citations are in the top 3 positions vs. lower?
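
As a rough sketch of the calculations above, assuming positions have already been logged as 1-based integers (the sample values and function names are hypothetical):

```python
from statistics import mean

# Hypothetical log: 1-based position of the domain's citation in each response
# where it was cited at all.
positions = [1, 2, 2, 4, 1, 6, 3]

def average_position(logged):
    """Mean citation position; lower is better."""
    return mean(logged)

def top_n_share(logged, n=3):
    """Percentage of citations that land in the top `n` positions."""
    return 100.0 * sum(1 for p in logged if p <= n) / len(logged)

avg = average_position(positions)
top3 = top_n_share(positions)
```

Responses that do not cite the domain are left out of the list here; they affect citation rate, not average position.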

Benchmark: If average citation position is 2.1 across 100 responses, that’s strong. If it’s 4.5, content is being cited but not prioritized.

Interpretation: An improving average position, meaning a falling number (e.g., 3.2 → 2.8 over a month), suggests content is gaining authority. A worsening position, meaning a rising number (2.5 → 3.8), may indicate new competitors or content freshness issues.

3. Query coverage - breadth of visibility

What it is: The number of distinct queries (out of a monitored set) that cite at least one piece of a domain’s content.

Why it matters: Citation rate reveals depth on individual queries. Query coverage reveals breadth. A site with a 25% citation rate on 100 queries might be cited on 25 queries in every response, or on 50 queries in about half of the responses for each. The latter is more resilient - if one query’s citation drops, others compensate.

How to track it:

- Count unique queries that cite the domain’s content: this is query coverage.

- Calculate coverage rate: (queries citing the domain’s content / total monitored queries) × 100.

- Segment by topic: which product categories, use cases, or regions have the strongest coverage?
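
A minimal sketch of the coverage calculation and the topic segmentation, assuming each monitored query has been tagged with a topic by hand (the query IDs, topic labels, and function names are hypothetical):

```python
from collections import defaultdict

# Hypothetical monitored set: query id -> topic label.
monitored = {
    "q1": "pricing",
    "q2": "pricing",
    "q3": "integrations",
    "q4": "integrations",
    "q5": "security",
}
# Queries where at least one response cited the domain.
cited_queries = {"q1", "q3", "q4"}

def coverage_rate(queries, cited):
    """Percentage of monitored queries that cite the domain at least once."""
    if not queries:
        return 0.0
    return 100.0 * sum(1 for q in queries if q in cited) / len(queries)

def coverage_by_topic(queries, cited):
    """Coverage rate per topic, to surface where the gaps are."""
    totals, hits = defaultdict(int), defaultdict(int)
    for query, topic in queries.items():
        totals[topic] += 1
        hits[topic] += query in cited  # True counts as 1
    return {topic: 100.0 * hits[topic] / totals[topic] for topic in totals}

overall = coverage_rate(monitored, cited_queries)
by_topic = coverage_by_topic(monitored, cited_queries)
```

In this toy data the "security" topic has no coverage at all, which is the kind of gap the segmentation is meant to expose.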

Benchmark: 40-50% query coverage on a well-chosen 100-query set is solid. 70%+ is exceptional.

Interpretation: Gaps in coverage reveal content opportunities. If competitors are cited on 60 queries and a domain is cited on 35, the 25-query gap is a roadmap for new content or optimization.

4. Traffic quality from AI citations - the conversion lens

What it is: Metrics that measure the value of traffic arriving from AI engine citations: time-on-page, scroll depth, pages-per-session, conversion rate, and bounce rate.

Why it matters: A citation is only valuable if it drives engaged traffic. High-quality traffic (users who spend 3+ minutes on page, scroll past the fold, convert) proves the citation is working. Low-quality traffic (bounce in 10 seconds) suggests the citation is misleading or the landing page isn’t matching user intent.

How to track it:

- UTM tagging: Tag all internal links with `utm_source=ai_citation` (or engine-specific: `utm_source=chatgpt_citation`, `utm_source=claude_citation`). This requires coordination with content - when a piece is likely to be cited, ensure it has proper tracking.

- Referrer analysis: In Google Analytics, filter for referrers like `chatgpt.com`, `claude.ai`, `gemini.google.com`, `perplexity.ai`. Compare their traffic quality to organic search.

- Conversion tracking: Set up conversion goals (signup, demo request, purchase) and attribute them to AI citation traffic.
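
For teams working from raw session exports rather than the analytics UI, the referrer analysis step might look roughly like this. The session fields (`referrer`, `seconds_on_page`, `bounced`) and the engine labels are assumptions for illustration; only the referrer hostnames come from the list above.

```python
from urllib.parse import urlparse

# Referrer hosts named above, mapped to hypothetical engine labels.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "chatgpt",
    "claude.ai": "claude",
    "gemini.google.com": "gemini",
    "perplexity.ai": "perplexity",
}

def classify_referrer(referrer_url):
    """Return an engine label for AI referrers, or None for anything else."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)

# Hypothetical session export.
sessions = [
    {"referrer": "https://chatgpt.com/", "seconds_on_page": 190, "bounced": False},
    {"referrer": "https://www.perplexity.ai/search?q=x", "seconds_on_page": 8, "bounced": True},
    {"referrer": "https://www.google.com/", "seconds_on_page": 120, "bounced": False},
]

ai_sessions = [s for s in sessions if classify_referrer(s["referrer"])]
ai_bounce_rate = 100.0 * sum(s["bounced"] for s in ai_sessions) / len(ai_sessions)
ai_avg_seconds = sum(s["seconds_on_page"] for s in ai_sessions) / len(ai_sessions)
```

The same two aggregates computed over the non-AI sessions give the organic baseline to compare against.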

Benchmark: AI citation traffic should have time-on-page comparable to or better than organic search (2-4 minutes is typical). Bounce rate should be under 40%.

Interpretation: If AI citation traffic has a 60% bounce rate but organic search is 35%, the landing page may not be optimized for the queries driving AI citations. If conversion rate is 2x higher from AI citations, that’s a signal to invest more in GEO.

5. Competitive share - relative performance

What it is: A domain’s citations as a percentage of all citations on a query, across all sources.

Why it matters: Absolute citation rate (a domain is cited on 25% of queries) is useful, but relative performance is more actionable. If competitors are cited on 80% of those same queries, the domain is losing share. If they’re cited on 15%, the domain is winning.

How to track it:

- For each monitored query, count total unique sources cited across all AI engines.

- Calculate: (domain’s citations on that query / total citations on that query) × 100.

- Aggregate across all queries for a competitive share metric.
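
One way to sketch this calculation, assuming citations are logged as a flat list of domains per query with repeats kept (the data and function names are hypothetical). The aggregate here pools citations across all queries; averaging the per-query shares is an equally reasonable choice and gives each query equal weight instead.

```python
from collections import Counter

# Hypothetical log: every citation observed per query, across all engines.
citations_by_query = {
    "q1": ["example.com", "rival.com", "other.org", "rival.com"],
    "q2": ["rival.com", "other.org"],
    "q3": ["example.com", "example.com", "other.org", "rival.com"],
}

def share_for_query(cited, domain):
    """Domain's citations as a percentage of all citations on one query."""
    return 100.0 * cited.count(domain) / len(cited) if cited else 0.0

def aggregate_share(by_query, domain):
    """Pooled share: domain's citations over all citations on all queries."""
    counts = Counter()
    for cited in by_query.values():
        counts.update(cited)
    total = sum(counts.values())
    return 100.0 * counts[domain] / total if total else 0.0

per_query_share = {q: share_for_query(c, "example.com") for q, c in citations_by_query.items()}
overall_share = aggregate_share(citations_by_query, "example.com")
```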

Benchmark: With 10 sources competing for citations, an equal distribution would be 10% each, so a 15% share is somewhat above par. 20%+ suggests outperformance. Below 10% suggests losing share.

Interpretation: Competitive share is a leading indicator of GEO effectiveness. If share is rising month-over-month while competitors’ shares fall, optimization is working. If it’s flat or declining, progress has stalled.

6. Citation freshness - recency of cited content

What it is: The age of content at the time it’s cited. A 2-week-old article cited today has high freshness; a 3-year-old article has low freshness.

Why it matters: AI engines may prioritize recent content for time-sensitive queries (news, product updates, market trends). Tracking freshness reveals whether content is being cited for its recency or its evergreen authority.

How to track it:

- Log the publication date of each piece of content that gets cited.

- Calculate average age of cited content: (sum of days since publication / number of citations) = average age.

- Track freshness over time: is the average age of cited content increasing (bad) or staying stable (good)?
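
The average-age formula above translates directly into date arithmetic. A small sketch, assuming each logged citation carries the publication date and the date it was observed (field names and sample data are hypothetical):

```python
from datetime import date

# Hypothetical citation log.
citations = [
    {"url": "/guide", "published": date(2024, 1, 10), "cited_on": date(2024, 7, 8)},
    {"url": "/update", "published": date(2024, 6, 8), "cited_on": date(2024, 7, 8)},
    {"url": "/news", "published": date(2024, 6, 24), "cited_on": date(2024, 7, 24)},
]

def average_cited_age_days(log):
    """Sum of days since publication divided by the number of citations."""
    ages = [(c["cited_on"] - c["published"]).days for c in log]
    return sum(ages) / len(ages) if ages else None

avg_age = average_cited_age_days(citations)
```

Computing this per month and comparing the values shows whether the average age of cited content is drifting upward.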

Benchmark: For evergreen content (guides, how-tos), average age of 6-12 months is normal. For news or trend content, average age under 2 weeks is expected.

Interpretation: If average citation age is rising (12 months → 18 months), it may signal that new content isn’t being cited as quickly, or that older content is becoming stale. Refresh or republish high-performing older pieces.

7. Attribution window - lag to first citation

What it is: The time between publishing a piece of content and its first citation in an AI engine response.

Why it matters: SEO has a well-known indexing lag (days to weeks). GEO has its own lag. Understanding it helps forecast when new content will start driving visibility and informs content calendars.

How to track it:

- Publish a piece and monitor it daily for citations.

- Log the date of first citation.

- Calculate lag: (first citation date - publication date) = attribution window.

- Track average lag across multiple pieces.
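
A minimal sketch of the lag calculation, assuming first-citation dates are recorded by hand or by a monitoring job (the field names and sample data are hypothetical). Pieces that have not been cited yet are skipped rather than counted as zero.

```python
from datetime import date
from statistics import mean

# Hypothetical publishing log; first_cited is None until a citation is seen.
pieces = [
    {"url": "/a", "published": date(2024, 5, 1), "first_cited": date(2024, 5, 4)},
    {"url": "/b", "published": date(2024, 5, 10), "first_cited": date(2024, 5, 31)},
    {"url": "/c", "published": date(2024, 6, 1), "first_cited": None},
]

def attribution_window_days(piece):
    """Days from publication to first citation, or None if not yet cited."""
    if piece["first_cited"] is None:
        return None
    return (piece["first_cited"] - piece["published"]).days

lags = [d for d in map(attribution_window_days, pieces) if d is not None]
average_lag = mean(lags) if lags else None
```

Because uncited pieces are excluded, the average understates the true lag while recent content is still waiting for its first citation; reporting the count of uncited pieces alongside it helps.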

Benchmark: Early data suggests AI engines cite content within 1-7 days of publication for high-authority sites, 7-30 days for mid-tier sites. Lag can extend to 60+ days for new or low-authority domains.

Interpretation: A 3-day attribution window means new content is visible quickly - teams can iterate and optimize based on early performance. A 30-day window means patience is required and rapid feedback loops aren’t possible.

8. Citation diversity - which engines cite content

What it is: The distribution of citations across different AI engines: ChatGPT, Claude, Gemini, Perplexity, Grok, etc.

Why it matters: Different engines have different user bases, query patterns, and citation algorithms. Being cited heavily on ChatGPT but rarely on Claude means missing a meaningful share of the AI search audience. Diversity reduces risk - if one engine changes its algorithm, others compensate.

How to track it:

- For each monitored query, log which engines cite the domain’s content.

- Calculate citation rate per engine: (citations on engine X / total queries monitored on engine X) × 100.

- Track distribution: what percentage of total citations come from each engine?
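
Both calculations above can be sketched from a simple list of (engine, cited) results, one per monitored query run. The data and function names below are hypothetical.

```python
from collections import Counter

# Hypothetical results: (engine, whether the domain was cited) per query run.
results = [
    ("chatgpt", True), ("chatgpt", True), ("chatgpt", False), ("chatgpt", True),
    ("claude", True), ("claude", False), ("claude", False), ("claude", False),
]

def per_engine_rate(runs):
    """Citation rate per engine: cited runs / total runs on that engine."""
    total, hits = Counter(), Counter()
    for engine, cited in runs:
        total[engine] += 1
        hits[engine] += int(cited)
    return {engine: 100.0 * hits[engine] / total[engine] for engine in total}

def citation_distribution(runs):
    """Share of all citations that come from each engine."""
    hits = Counter(engine for engine, cited in runs if cited)
    n = sum(hits.values())
    return {engine: 100.0 * count / n for engine, count in hits.items()} if n else {}

rates = per_engine_rate(results)
distribution = citation_distribution(results)
```

The two views answer different questions: the per-engine rate is independent of how many queries were run on each engine, while the distribution is not, so it is worth running the same number of queries on every engine before reading much into the skew.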

Benchmark: A balanced distribution (20-25% from each of 4-5 major engines) is ideal. Heavy skew toward one engine (60%+ from ChatGPT) suggests optimization is engine-specific, not universal.

Interpretation: If a domain is cited on 30% of ChatGPT queries but only 10% of Claude queries, investigate why. Is the content format better suited to ChatGPT’s response style? Are there Claude-specific queries being missed?

9. Content type performance - what gets cited

What it is: Citation rates segmented by content type: blog posts, guides, case studies, whitepapers, product pages, research reports.

Why it matters: Different content types have different citation potential. AI engines may prefer long-form guides over short blog posts, or case studies over opinion pieces. Tracking this reveals which content formats are working.

How to track it:

- Tag each piece of content with its type in analytics.

- Calculate citation rate by type: (pieces of type X cited at least once / total content pieces of type X) × 100.

- Track which types appear most frequently in AI responses.
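
A short sketch of the by-type calculation. It assumes one interpretation of the formula above, namely the share of pieces of each type that have been cited at least once, which keeps the rate between 0 and 100. The content inventory and function name are hypothetical.

```python
from collections import defaultdict

# Hypothetical content inventory with a citation count per piece.
content = [
    {"url": "/guide-a", "type": "guide", "citations": 12},
    {"url": "/guide-b", "type": "guide", "citations": 0},
    {"url": "/blog-a", "type": "blog", "citations": 1},
    {"url": "/blog-b", "type": "blog", "citations": 0},
    {"url": "/blog-c", "type": "blog", "citations": 0},
    {"url": "/blog-d", "type": "blog", "citations": 0},
]

def citation_rate_by_type(pieces):
    """Percentage of pieces of each type cited at least once (assumed definition)."""
    total, cited = defaultdict(int), defaultdict(int)
    for piece in pieces:
        total[piece["type"]] += 1
        cited[piece["type"]] += piece["citations"] > 0  # True counts as 1
    return {t: 100.0 * cited[t] / total[t] for t in total}

rates_by_type = citation_rate_by_type(content)
```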

Benchmark: Comprehensive guides and research reports typically have higher citation rates (25-40%) than short blog posts (10-20%). Case studies and product pages vary widely.

Interpretation: If guides are cited 3x more often than blog posts, invest in longer-form content. If case studies aren’t being cited at all, either they’re not discoverable or they don’t match AI engine query patterns.

10. Visibility decay - how long citations persist

What it is: The rate at which citations for a piece of content decline over time after publication.

Why it matters: Some content gets cited heavily for weeks then drops off (trend content). Other content maintains steady citations for months (evergreen). Understanding decay helps prioritize refresh cycles and identify which pieces need updates.

How to track it:

- For each piece of content, track citation count weekly for 12 weeks post-publication.

- Plot citation count over time.

- Calculate decay rate: ((citations in week 1 - citations in week 12) / citations in week 1) × 100 = decay percentage.
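
The decay formula above, applied to two hypothetical 12-week citation series (the counts are invented for illustration):

```python
def decay_percentage(weekly_counts):
    """Drop from the first week to the last week, as a percentage of the first."""
    first, last = weekly_counts[0], weekly_counts[-1]
    if first == 0:
        return None  # nothing to decay from
    return 100.0 * (first - last) / first

# Hypothetical weekly citation counts, weeks 1 through 12.
evergreen = [20, 19, 20, 18, 19, 18, 18, 17, 18, 17, 17, 17]
trend = [40, 30, 22, 15, 12, 10, 8, 7, 6, 5, 5, 4]

evergreen_decay = decay_percentage(evergreen)
trend_decay = decay_percentage(trend)
```

Since the formula only looks at the endpoints, a noisy first or last week can distort it; plotting the full series, as suggested above, is a useful cross-check.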

Benchmark: Evergreen content should show minimal decay (10-20% over 12 weeks). Trend content may decay 50%+ in the same period.

Interpretation: Rapid decay (50%+ in 4 weeks) suggests content is time-sensitive or losing relevance. Plan refreshes or updates accordingly. Stable citations (flat line) suggest content is evergreen and worth maintaining.

Building a GEO analytics stack

These 10 metrics form a complete GEO measurement framework. But implementing them requires tooling:

Manual tracking (spreadsheet-based):

- Monitor 50-100 queries weekly across 3-5 AI engines.

- Log citations, positions, and sources.

- Calculate metrics in a dashboard.

- Effort: 4-6 hours/week. Cost: $0.

Semi-automated (API-based):

- Use AI engine APIs (where available) to query responses programmatically.

- Parse responses to extract citations.

- Log to a database.

- Effort: 2-4 hours/week. Cost: $500-2000/month depending on query volume.
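
The parse-and-log steps of the semi-automated approach could look something like the sketch below. It deliberately leaves out the API call itself, since each engine's API differs, and assumes the response text is already in hand. The URL regex, table schema, and function names are all illustrative assumptions, and real responses may expose citations as structured metadata rather than inline URLs.

```python
import re
import sqlite3
from urllib.parse import urlparse

# Simple heuristic for inline URLs; an assumption, not a full URL grammar.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")

def extract_cited_domains(response_text):
    """Return cited domains in order of first appearance, without duplicates."""
    domains = []
    for url in URL_PATTERN.findall(response_text):
        host = (urlparse(url.rstrip(".,;")).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host and host not in domains:
            domains.append(host)
    return domains

def log_response(conn, query, engine, run_date, response_text):
    """Store one row per cited domain, with its 1-based position."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS citations "
        "(query TEXT, engine TEXT, run_date TEXT, position INTEGER, domain TEXT)"
    )
    rows = [
        (query, engine, run_date, pos, domain)
        for pos, domain in enumerate(extract_cited_domains(response_text), start=1)
    ]
    conn.executemany("INSERT INTO citations VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)

conn = sqlite3.connect(":memory:")  # swap for a file path to persist the log
sample = "See https://www.example.com/guide and https://rival.com/pricing. Also (https://example.com/faq)."
logged = log_response(conn, "crm pricing", "perplexity", "2024-07-08", sample)
stored = conn.execute("SELECT position, domain FROM citations ORDER BY position").fetchall()
```

A table in this shape is enough to derive citation rate, position, coverage, and per-engine diversity with plain SQL queries.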

Fully automated (GEO platform):

- Zeover crawls AI engines, extracts citations, and calculates all 10 metrics automatically.

- Real-time dashboards, alerts, and competitive benchmarking.

- Effort: 30 minutes/week for review. Cost: platform subscription.

The metrics that matter most

For teams starting GEO analytics from scratch, prioritize in this order:

1. Citation rate - the foundation. Without it, nothing else can be measured.

2. Citation position - reveals whether citations are high-value or low-value.

3. Query coverage - shows breadth of visibility and content gaps.

4. Traffic quality - proves citations drive real business value.

5. Competitive share - contextualizes performance against rivals.

The remaining five metrics (freshness, attribution window, diversity, content type, decay) are refinements that become valuable once the core five are in place.

Moving from measurement to action

Metrics are only useful if they drive decisions. Here’s how to act on each:

- Citation rate rising → optimization is working; double down on the tactics driving it.

- Citation position worsening (average position number rising) → content may be losing authority; refresh or update it.

- Query coverage gaps → create new content targeting uncovered queries.

- Traffic quality low → landing page doesn’t match user intent; revise or redirect.

- Competitive share declining → competitors are outperforming; audit their content and strategy.

- Citation freshness declining (average age of cited content rising) → refresh or republish high-performing older pieces.

- Attribution window long → new content needs time to be discovered; plan content calendar accordingly.

- Citation diversity skewed → optimize for underperforming engines; study their response patterns.

- Content type underperforming → shift investment to higher-performing formats.

- Visibility decay rapid → content is time-sensitive; plan refresh cycles.

The goal isn’t to track metrics for their own sake - it’s to close the loop between measurement and optimization. Each metric should trigger a specific action. If it doesn’t, it’s noise.

What’s next

GEO analytics is still evolving. As AI engines mature and standardize their citation practices, measurement will become easier. But the fundamentals - citation rate, position, coverage, traffic quality, and competitive share - will remain the core of any GEO strategy.

Start with citation rate and position. Build from there. The brands that measure GEO rigorously today will be the ones that dominate AI search visibility tomorrow.

Zeover tracks all 10 of these metrics across ChatGPT, Claude, Gemini, Perplexity, and Grok, with real-time dashboards and competitive benchmarking. If manual tracking feels overwhelming, that’s what the platform was built to solve - so teams can focus on optimization instead of spreadsheets.