Automating the Content Workflow - When AI Generation Earns Its Place (Part 5 of 5)

Scaling content without sacrificing quality requires a workflow AI and humans can both live inside. Zeover generates content tuned to the brand’s governance document, runs it through consistency and citation-readiness checks, and tracks how each piece performs across ChatGPT, Claude, Gemini, Grok, and Perplexity. Publish faster without losing the signal.

Content operations in 2026 are running two experiments in parallel. The first treats AI as a volume engine: generate ten posts a week, publish them all, hope traffic follows. The second treats AI as a production tool: draft faster, edit heavier, publish fewer pieces at a higher bar. The traffic data from 2025 and 2026 is conclusive about which experiment wins. Sites that published 50 to 100 AI-assisted pieces with meaningful human editing saw traffic climb. Sites that published a thousand or more AI pieces with no editing saw traffic drop sharply. The difference isn’t the tool. The difference is the workflow.

The practical question of how to use AI in marketing without losing citation rate collapses into a workflow question, not a tooling question. The teams that picked the right workflow in 2025 are pulling away from the teams that picked the wrong one, and the gap is now showing up in cross-engine citation share, not just in Google traffic. This piece sits inside a broader AI marketing strategy where content production is one input alongside governance, machine readability, and benchmarking - the four disciplines a Generative Engine Optimization program runs simultaneously.

This is Part 5 of a five-part series on content marketing strategy for the AI era. Part 1 argued that content matters more, not less. Part 2 covered governance. Part 3 covered machine readability. Part 4 covered cross-engine benchmarking. Part 5 closes the series with the workflow that ties the first four parts to a production cadence, and with the build-vs-buy decision that determines whether a platform carries the workload or a spreadsheet does.

TL;DR

- AI generation earns its place in the workflow when it drafts against a brief, draws from a governed source-of-truth document, and goes through an editor before publishing. It loses its place when it replaces the editor.

- Google’s stated position is that it penalizes low-quality content, not AI content. AI engines draw the same distinction in their citation decisions.

- The three production patterns that work in 2026 are brief-first AI drafting, AI rewriting of human outlines, and AI-assisted fact-checking. Fully autonomous content generation with no human in the loop is the pattern that destroys citation rates.

- Readiness signals for investing in a content-generation or GEO platform include ten-plus monthly posts across producers, multi-brand or multi-location footprints, and the explicit need for cross-engine benchmarking. Below those thresholds, a spreadsheet and a ChatGPT subscription cover the work.

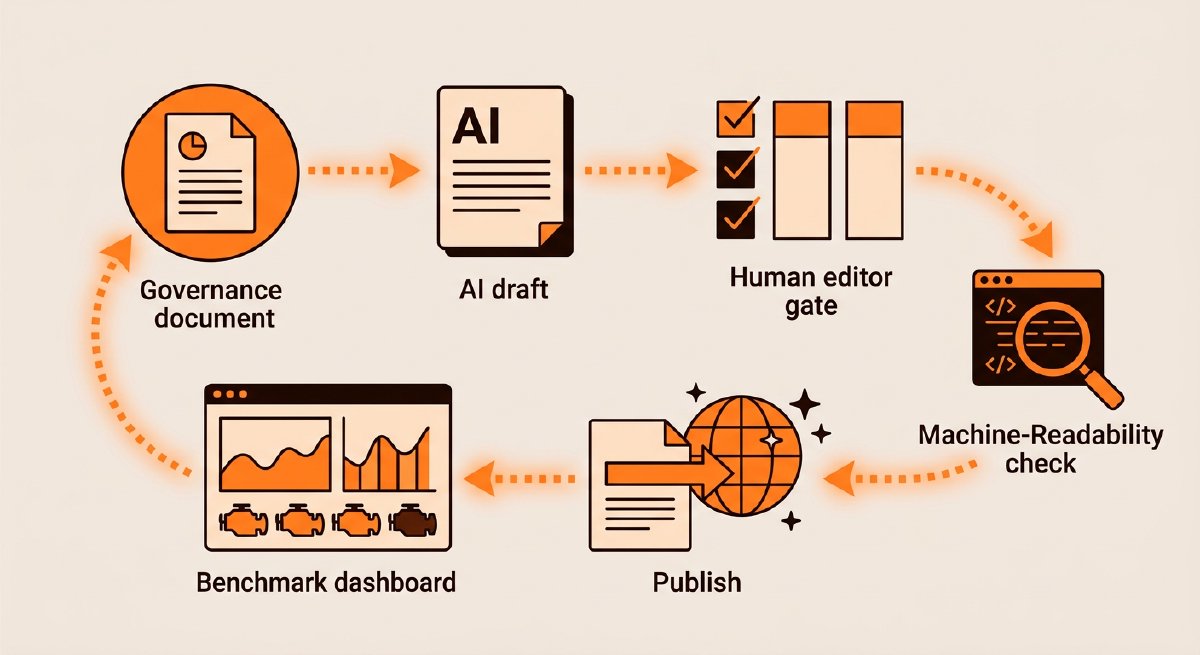

- The close-the-loop workflow pattern is: governance document → AI draft against a brief → human edit → machine-readability check → cross-engine benchmark post-publish → report into the next brief. Every step in the series connects to the next.

Where AI Generation Earns Its Place

AI generation earns its place at the drafting layer, not the editorial layer. The distinction matters because traffic data from 2025 through early 2026 shows a stark divergence: sites treating AI as a drafting assistant saw traffic increases of 30 to 80 percent over the year, while sites using AI as an unattended publishing engine lost 40 to 90 percent of traffic in the same window. Google’s own position, documented in the Helpful Content guidance, is that it assesses quality regardless of how the content was produced. The penalty is for unhelpful content, not for AI-origin content.

Three AI generation patterns work reliably in 2026:

Brief-first AI drafting. The content owner writes a brief that includes the target query, the positioning sentence, the key claims, and the citable statistics the piece must include. The AI drafts against the brief. A human editor revises for voice, accuracy, and the paragraph contract (see Part 3). The finished piece publishes. This pattern inherits the quality signal of the editor while capturing the speed of the AI.

AI rewriting of human outlines. A human writes the outline and section leads. The AI fills in the paragraph-level prose. The same editor reviews. This variant produces stronger voice consistency at the cost of slightly more human time up front.

AI-assisted fact-checking and refresh. The AI scans the existing content library for stale facts (outdated statistics, superseded product names, deprecated customer examples) and flags the passages for human revision. This pattern pays for itself fast because most brands have more stale content than they can update manually.
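The flag-for-revision step in the third pattern can be sketched in a few lines. This is a minimal illustration, not the method any particular tool uses: it swaps the AI scan for plain term matching, and the `superseded` mapping, the page paths, and the "Acme" examples are all invented. The sketch only flags passages; a human editor still makes the revision.

```python
import re
from dataclasses import dataclass


@dataclass
class StaleFlag:
    page: str        # identifier of the page containing the passage
    line_no: int     # 1-based line number within the page
    term: str        # superseded term that was matched
    suggestion: str  # current wording, e.g. from the governance document
    passage: str     # the line handed to a human editor


def find_stale_passages(library: dict[str, str], superseded: dict[str, str]) -> list[StaleFlag]:
    """Flag every line that mentions a superseded term. Nothing is rewritten."""
    flags = []
    for page, text in library.items():
        for line_no, line in enumerate(text.splitlines(), start=1):
            for old, new in superseded.items():
                # Case-insensitive match on the literal term at word boundaries.
                if re.search(rf"\b{re.escape(old)}\b", line, flags=re.IGNORECASE):
                    flags.append(StaleFlag(page, line_no, old, new, line.strip()))
    return flags


# Hypothetical content library and superseded-term list.
library = {
    "/blog/launch": "Acme Sync Classic ships today.\nOver 1,200 customers rely on it.",
    "/blog/update": "Acme Sync now supports SSO.",
}
superseded = {"Acme Sync Classic": "Acme Sync", "1,200 customers": "3,000 customers"}

for flag in find_stale_passages(library, superseded):
    print(f"{flag.page}:{flag.line_no} replace '{flag.term}' with '{flag.suggestion}'")
```

Literal matching misses paraphrased facts, which is where an AI pass would add value. The output shape, a list of passages for an editor, stays the same either way.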

Where AI Generation Fails

Three anti-patterns recur in the 2026 post-mortems of failed AI content programs:

Autonomous publishing. The AI drafts and publishes without a human editor. The traffic collapse happens in two phases: Google’s quality signal catches the low-signal pages first, then AI engines stop citing the domain because the volume of low-quality pages dilutes the brand entity signal. Both are slow failures, so the team doesn’t notice until the quarterly benchmark shows a 40% or larger decline.

Prompt-level outsourcing with no governance input. Writers or agencies use ChatGPT with generic prompts (“write a 1,500-word blog post about X for a B2B audience”), lightly edit the output, and ship it as if it were finished. The output reads like the average of every other company in the category, which is exactly the problem. AI engines are looking for the signal that distinguishes the brand, and generic prompts produce generic output.

Volume-over-quality scaling. A marketing team sets a 3x or 5x content volume target because “AI can handle it.” Volume becomes the KPI instead of citation rate. Within a quarter, the content inventory is polluted with near-duplicate pages that confuse both Google’s crawler and AI engines’ entity resolution. The remediation cost exceeds the benefit of the original volume.

The common thread across all three is that the AI replaced the editor, not the typing. The workflow that works keeps the editor in the loop and replaces the typing.
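To make the contrast with the generic prompt concrete, here is a hedged sketch of a brief rendered into drafting instructions. The four fields come from the brief-first pattern described earlier (target query, positioning sentence, key claims, citable statistics). The `Brief` class, the prompt wording, and the example values are assumptions for illustration, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    target_query: str
    positioning_sentence: str
    key_claims: list[str]
    citable_statistics: list[str]


def drafting_prompt(brief: Brief) -> str:
    """Render a brief into instructions for whichever AI tool the team uses."""
    lines = [
        f"Draft an article answering the query: {brief.target_query}",
        f"Positioning sentence (use as written): {brief.positioning_sentence}",
        "Make these claims and no others:",
        *[f"- {claim}" for claim in brief.key_claims],
        "Include these statistics exactly as written:",
        *[f"- {stat}" for stat in brief.citable_statistics],
        # Hypothetical guard: unsupported figures are left for the editor.
        "Insert [NEEDS SOURCE] instead of inventing any figure.",
    ]
    return "\n".join(lines)


# Invented example values.
brief = Brief(
    target_query="how to sync inventory across retail locations",
    positioning_sentence="Acme Sync keeps multi-location inventory consistent.",
    key_claims=["Sync runs without manual exports."],
    citable_statistics=["3,000 customers as of the latest governance document."],
)
print(drafting_prompt(brief))
```

The prompt is only the drafting input. The editor still reviews the result against the governance document before anything publishes.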

The Close-the-Loop Workflow

A content operation that actually connects governance, machine readability, benchmarking, and production ends up with a single workflow that cycles on a monthly cadence:

- Brief creation. The content owner pulls the positioning sentence, customer categories, and canonical numeric facts from the governance document (Part 2) into a brief. The brief specifies the target query, the expected citation behavior (which engines, which competitors), and the machine-readability requirements (schema type, entity page, internal linking).

- Draft production. A writer, agency, or AI assistant produces a draft against the brief. The path chosen depends on topic complexity, editorial depth required, and production capacity.

- Editorial pass. A human editor reviews against the governance document and the paragraph contract (Part 3). Inconsistencies get flagged. Stale claims get corrected.

- Machine-readability validation. Schema is added or verified. Heading hierarchy is checked. Entity page internal links are confirmed. The page passes a pre-publish automated check before going live.

- Publish. The piece goes live on a canonical URL with correct metadata.

- Benchmark sweep. The cross-engine benchmark runs on the next cycle (Part 4), capturing whether the piece earned citations and from which engines.

- Report into the next brief. The benchmark data feeds the next cycle’s briefs, prioritizing prompts the brand lost and deprioritizing those it already owns.
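The machine-readability validation step in the list above lends itself to a small automated gate. The following is a minimal sketch using only the Python standard library. The specific rules (exactly one `h1`, no skipped heading levels, at least one JSON-LD block carrying an `@type`) are assumptions chosen for illustration; a real check would encode whatever the team's own Part 3 requirements specify, including the entity-page links this sketch does not cover.

```python
import json
from html.parser import HTMLParser


class _PageScan(HTMLParser):
    """Collects heading levels and JSON-LD script bodies from an HTML page."""

    def __init__(self):
        super().__init__()
        self.heading_levels = []
        self.jsonld_blocks = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag in ("h1", "h2", "h3", "h4", "h5", "h6"):
            self.heading_levels.append(int(tag[1]))
        elif tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.jsonld_blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.jsonld_blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def prepublish_problems(html: str) -> list[str]:
    """Return a list of problems; an empty list means the page may publish."""
    scan = _PageScan()
    scan.feed(html)
    scan.close()
    problems = []
    if scan.heading_levels.count(1) != 1:
        problems.append("expected exactly one h1")
    for prev, cur in zip(scan.heading_levels, scan.heading_levels[1:]):
        if cur > prev + 1:
            problems.append(f"heading jumps from h{prev} to h{cur}")
    has_typed_schema = False
    for raw in scan.jsonld_blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            problems.append("JSON-LD block is not valid JSON")
            continue
        if isinstance(data, dict) and "@type" in data:
            has_typed_schema = True
    if not has_typed_schema:
        problems.append("no JSON-LD block with an @type")
    return problems
```

Returning a list of problems rather than a boolean lets the same function block publication and tell the editor what to fix.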

Every piece in the series connects. The benchmark data from Part 4 changes the briefs in Part 5. The machine-readability checks from Part 3 block publication if the piece isn’t cite-ready. The governance document from Part 2 is the input to every brief. The strategic framing from Part 1 justifies the whole cycle to the CFO who approves the headcount.
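The hand-off from benchmark to brief can be sketched as a simple ordering. This is an illustrative assumption about how "lost" and "owned" prompts might be scored: each prompt gets the fraction of the five engines that cited the brand, and the next cycle's briefs start from the lowest fraction. The prompt strings and results are invented.

```python
ENGINES = ("ChatGPT", "Claude", "Gemini", "Grok", "Perplexity")


def citation_share(cited_by: set[str]) -> float:
    """Fraction of the five engines that cited the brand for one prompt."""
    return len(cited_by & set(ENGINES)) / len(ENGINES)


def next_cycle_queue(results: dict[str, set[str]]) -> list[tuple[str, float]]:
    """Order prompts for the next round of briefs, weakest citation share first."""
    scored = [(prompt, citation_share(cited)) for prompt, cited in results.items()]
    # sorted() is stable, so prompts with equal share keep their input order.
    return sorted(scored, key=lambda item: item[1])


# Hypothetical benchmark results: prompt -> engines that cited the brand.
results = {
    "best inventory sync for retailers": set(ENGINES),
    "inventory sync pricing comparison": set(),
    "inventory sync with sso": {"Claude", "Perplexity"},
}
for prompt, share in next_cycle_queue(results):
    print(f"{share:.0%}  {prompt}")
```

A fuller version would also weigh which competitors were cited instead, since the brief described above records expected competitor behavior as well.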

When to Invest in a Platform

The build-vs-buy question is the last strategic decision in this series. It matters because the workflow above can be run manually, but doing so gets expensive past a certain volume.

Readiness signals for investing in a GEO or content platform:

- Ten or more posts per month across multiple producers. Below that volume, a spreadsheet and an editor cover the governance work. Above it, consistency checking becomes a bottleneck.

- Multi-brand or multi-location footprint. Every brand or location is a separate entity that needs independent governance, machine-readability, and benchmarking. Manual tracking breaks around three brands or twenty locations.

- Explicit need for cross-engine benchmarking. Running the five-engine benchmark from Part 4 manually costs 10 to 20 analyst-hours per cycle. Automated platforms compress that to minutes.

- Multiple stakeholders reviewing the benchmark data. CMO, head of content, product marketing, brand, and an agency all consuming the same data is a sign the data needs a persistent dashboard, not a monthly PDF.

- The brand’s AI citation rate is materially below competitors. Closing a gap requires faster iteration than a quarterly manual cycle can support.

Below those thresholds, the right answer is a scrappy toolkit: the governance document lives in Notion, the briefs in Docs, the benchmarks in a spreadsheet, and the drafts in any AI tool the team already uses. The cost of adopting a platform is higher than the return until the volume is there.

Above those thresholds, the math reverses. A platform that already crawls the brand’s public surface, tracks citations across five engines, confirms machine-readability, and creates on-brand drafts against the governance document compresses the workflow cycle from weeks to days. The analyst time saved pays for the platform within a quarter for most mid-sized operations.
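The readiness signals in this section can be written down as an explicit checklist. The sketch below is one possible encoding, not a scoring rule from the series: the field names are invented, the stakeholder cutoff of three and the ten-point citation gap are assumptions standing in for "multiple" and "materially below", and the brand and location cutoffs reuse the points where manual tracking is said to break.

```python
from dataclasses import dataclass


@dataclass
class ContentOperation:
    posts_per_month: int
    producers: int
    brands: int
    locations: int
    needs_cross_engine_benchmark: bool
    benchmark_stakeholders: int
    citation_rate: float             # brand's citation rate, 0.0 to 1.0
    competitor_citation_rate: float  # comparison rate, 0.0 to 1.0


def readiness_signals(op: ContentOperation, material_gap: float = 0.10) -> list[str]:
    """List which readiness signals an operation shows. No verdict is computed."""
    signals = []
    if op.posts_per_month >= 10 and op.producers > 1:
        signals.append("volume")
    if op.brands >= 3 or op.locations >= 20:
        signals.append("footprint")
    if op.needs_cross_engine_benchmark:
        signals.append("benchmarking")
    if op.benchmark_stakeholders >= 3:  # assumed reading of "multiple"
        signals.append("stakeholders")
    if op.competitor_citation_rate - op.citation_rate >= material_gap:
        signals.append("citation gap")  # assumed reading of "materially below"
    return signals


# Invented example: a multi-producer team with a visible citation gap.
team = ContentOperation(12, 3, 3, 5, True, 5, 0.10, 0.40)
print(readiness_signals(team))
```

The function deliberately returns the list of signals instead of a buy-or-build answer, since how many signals justify a platform is a judgment the section leaves to the team.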

What the Platform Should Do

For organizations ready to invest, the capability checklist for an AI marketing platform worth adopting (vendors variously call this category a Generative Engine Optimization platform, a GEO platform, or just AI marketing tools - same job, different labels):

- Continuous cross-engine benchmarking. Citation rate, sentiment, and summary accuracy tracked across ChatGPT, Claude, Gemini, Grok, and Perplexity on at least a weekly cadence without analyst setup per cycle.

- Brand consistency auditing. Automated crawl of owned and third-party surfaces, with flags when product descriptions, customer categories, or numeric facts contradict the governance document.

- Machine-readability validation. Automated checks for schema coverage, heading hierarchy, canonical URLs, and llms.txt presence, with remediation recommendations.

- Draft generation tuned to the governance document. The AI draft pulls from the locked positioning sentence, approved claims, and canonical numeric facts, not from a generic model with no brand context.

- Integration with existing editorial workflow. The platform does not replace the CMS, the editorial review tool, or the analytics stack. It slots into them.

A platform that does three of those five is worth assessing. A platform that does all five is the target for mid-sized and enterprise operations.

What to Do First

For marketing leaders reading this series and wondering where to start, the order of operations for the next 90 days:

- Write the governance document (Part 2). Two to four days of focused work with the head of content and the head of product marketing.

- Run a machine-readability audit on the top 20 content pages and fix the schema and heading gaps (Part 3). One to two weeks.

- Set up a five-engine benchmark on a 20-prompt commercial set (Part 4). One week to define prompts; ongoing monthly cadence after that.

- Implement the close-the-loop workflow for new content (Part 5, this piece). Start with the next piece scheduled, not with a retroactive rollout.

- Assess platform options only after the workflow has run manually for one full cycle. The platform is an optimization of a working process, not a substitute for one.

Roughly four weeks of focused work, spread across those 90 days, produces a content operation that’s materially better tuned for AI-mediated discovery than what most of the category is running in 2026.

Closing the Series

The thesis of this series has been that content marketing strategy matters more in the AI era, not less, because the content a brand ships is the raw material AI engines turn into recommendations. Governance, machine readability, benchmarking, and a workflow that connects them are the four disciplines a modern content operation runs. Each one reinforces the others. None of them is optional for a brand that wants to show up in the answers qualified buyers are getting from ChatGPT, Claude, Gemini, Grok, and Perplexity in 2026 and beyond. The same four disciplines anchor a coherent AI marketing strategy at the operational level - the layer most playbooks skip in favor of vision slides.

The operations investing in these disciplines this quarter will see the gains compound through 2026 and 2027. The operations that treat content as a commodity output lose visibility share to competitors who did the work. The gap between the two groups is widening every benchmark cycle.