What's Not GEO - Indirect Prompt Injection and Why Working With LLMs Beats Tricking Them

GEO Trust & Safety AI Strategy

Real GEO is what makes brands citable through channels engines welcome. Zeover keeps brand data consistent across every public surface, generates content in formats AI engines extract cleanly, and notifies the major LLMs through the entity-update channels each one documents - never through hidden text, planted prompts, or any other tactic the engines are now actively detecting. See how the legitimate playbook works.

On April 23, 2026, Google’s security team published the results of an open-web scan measuring how widespread adversarial prompt injection has become on the pages AI agents crawl. The headline number: a 32% relative rise in malicious activity between November 2025 and February 2026, across a dataset of 2-3 billion pages per month. The mechanism: people hiding instructions on ordinary web pages so that an AI agent reading the page silently obeys them.

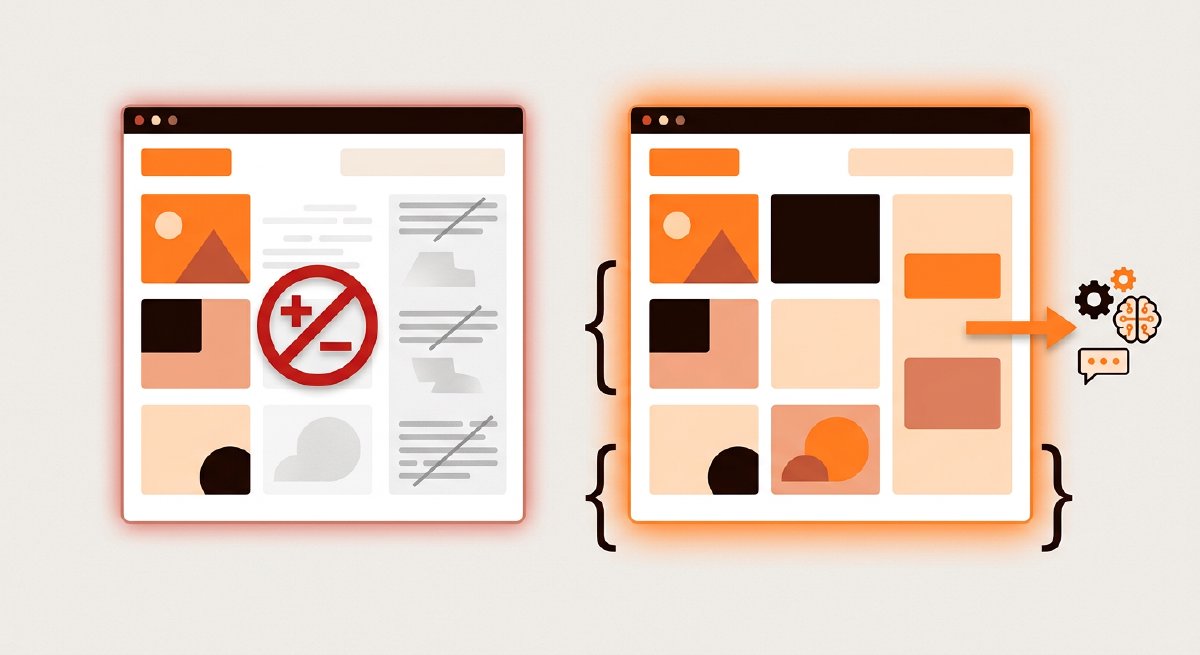

This isn’t aggressive SEO. It’s not even what most of the marketing-tools market would call black-hat GEO. It’s a different category, and the reason it matters editorially is that the line between legitimate GEO and the techniques Google is now actively detecting has narrowed in vendor pitches faster than it has in reality. Operators who don’t know the difference get sold tactics that work for one quarter and produce penalties that take three quarters to claw back. The shape of legitimate GEO is the opposite of the shape Google’s security team just called out. That contrast is worth being explicit about.

What Google reported

The pattern is called indirect prompt injection. An attacker plants instructions on a page; an AI agent reading the page treats those instructions as part of the user’s request and acts on them. The instructions are usually invisible to a human reader. The AI sees them anyway because it processes the underlying HTML, CSS, and metadata.

The tactics Google’s scan caught in the wild include:

- White-on-white text sized down to roughly one pixel, hiding instructions in plain sight on a page that visually looks normal.

- CSS-hidden elements using

display: noneor near-transparent color values to keep the text rendered for crawlers but not for visitors. - Zero-width Unicode characters embedded between visible characters, encoding hidden instructions invisible to the reader’s eye.

- HTML comment payloads placed in source code and metadata fields like meta descriptions, alt attributes, and structured data fields that engines read but visitors don’t see.

Help Net Security’s coverage of the report noted that the targets aren’t just chatbots. Indirect prompt injection affects any agent that browses and summarizes web pages, indexes content for RAG pipelines, auto-processes metadata, or reviews pages for moderation or ranking purposes. Researchers tracking the same pattern identified 10 distinct payloads in the wild aimed at financial fraud, data exfiltration, and API key theft.

Why this matters for marketing teams

Most marketing operations would never knowingly do any of this. The risk isn’t deliberate adversarial behavior; it’s accidental adjacency. Some “AI optimization” vendors and agencies pitch tactics that walk close to this line: stuffing schema fields with content that doesn’t appear on the page, embedding hidden instructions in alt text or meta descriptions, structuring HTML comments to bias how AI engines summarize the page. The pitch is usually framed as “helping the AI understand the page better.” Google’s security team just published a list of techniques that Google’s own crawlers are now actively detecting and downweighting.

Operators who buy these tactics get short-term wins. The medium-term consequence, now that detection is published and improving, is one of the engines deciding the page is adversarial and downweighting it for everything, not just the manipulation. The brand pays a long-tail cost for a quarter of artificial lift.

The line between this and legitimate GEO isn’t subtle once it’s named. Anything visible to a human reader on the page is fair game for the engine to extract. Anything invisible to the human but readable by the crawler is in the territory Google just called out.

What legitimate GEO actually looks like

Legitimate GEO is the opposite shape across every dimension Google’s report flags:

Visible content, not hidden. The substantive material engines extract should be the same material a human reader sees. Schema markup that describes content already on the page is fine; schema fields stuffed with content that doesn’t appear in the rendered article is the territory Google’s report covers.

Signal through documented channels, not embedded payloads. When the brand’s data changes - a new location opens, a product launches, a positioning shifts - the engines all publish documented update channels for entity changes. Using those channels is how the chain we wrote about in the GEO for Restaurants piece went from one-location visibility to first-position recommendations across three of its core cities. No hidden text, no planted instructions. Just consistent boilerplate plus a notification through channels the engines welcome.

Substance, not adjectives. Per the AI Press Releases piece, the content that AI engines cite is the content with verifiable facts, primary-source links, and specific numbers. Engines extract from what they can verify; manipulation tries to short-circuit verification.

Earning citations, not engineering them. Legitimate GEO produces content that genuinely answers a question better than the alternatives. Adversarial GEO tries to bias the engine into saying something the content alone wouldn’t earn.

The contrast is worth naming because some operators conflate the two. They aren’t the same category. One compounds across years; the other gets caught and reversed within quarters.

How engines detect the difference

Google’s security blog post is an unusual artifact for one specific reason: it’s a published account of detection methods working at scale across 2-3 billion pages monthly. That kind of detection doesn’t disappear. It improves. The economics of indirect prompt injection are now firmly negative for any operator paying attention to a multi-quarter horizon.

The detection patterns Google described aren’t exotic:

- Comparing rendered visible text against the underlying HTML reveals invisible payloads.

- Comparing schema fields against the page’s actual content surfaces stuffing.

- Cross-referencing metadata against rendered content catches HTML comment payloads.

- Pattern-matching against known prompt-injection phrasings catches the unsophisticated cases.

A brand following the legitimate GEO playbook passes all of these checks by design. There’s nothing hidden to detect. The schema describes what’s on the page. The metadata reflects the rendered content. The boilerplate matches the brand’s actual claims.

The competitive moat hidden in the cleanup

The pragmatic implication for marketing leaders is that the gap between legitimate and adversarial GEO is now a competitive moat, not just an ethics question. Brands that are clean are also durable. Brands that aren’t are sitting on a stock of accumulated risk that detection will eventually realize.

For operators who want to audit their own situation, three checks cover most of the territory:

- Pull the rendered text on the brand’s top 20 pages. Compare against the HTML source. Any text in the source that doesn’t appear rendered is worth investigating, regardless of who recommended it.

- Read the schema on those pages. Confirm every claim in the schema corresponds to content visible on the page itself.

- Audit the metadata fields (meta descriptions, alt text, structured data fields) for content that doesn’t reflect the rendered article.

If those checks pass, the brand is on the legitimate side of the line. If they don’t, the fix is pre-emptive: clean up before detection forces it. The cost of the cleanup is small. The cost of being on the wrong side when an engine downweights the domain is significant and recovers slowly.

What this means for buyers picking platforms

The line between legitimate and adversarial GEO is also the line buyers should walk when picking AI marketing tools, AEO marketing tools, marketing automation platforms, or content generation solutions. Vendors pitching tactics that depend on what humans don’t see - schema fields stuffed with content not on the page, hidden alt text, AI-targeted instructions in metadata - are pitching short-term gains and long-term penalty risk. Vendors pitching tactics that work through the channels engines document - entity updates, structured data that matches rendered content, content tuned for citation by being substantive rather than concealed - are pitching durable visibility.

For marketing leaders evaluating tools for growth marketers, AI content marketing solutions, or any AI marketing platform claiming to “help the AI understand the page better,” the diligence question is the one engines now ask of pages: is the tool helping the brand surface what it actually has, or helping it surface what it doesn’t? AI marketing strategy that compounds across years runs on the first answer. The second answer compounds against the brand within quarters once detection improves.

Improving brand visibility in AI engines is unromantic work. Consistent data across surfaces, content with substance, signal through documented update channels. It looks the same whether the operator does it manually, through an agency, or through a platform. What changes is which side of the line the operator’s chosen tactics put them on when Google’s security team publishes the next scan.

Working with LLMs is the durable position

The thread that runs across Zeover’s work, and across the editorial we’ve published this month, is the same: the engines are not adversaries to be tricked. They publish update channels for entity data. They document what they extract and how. They reward content with substance and signal-rich structure. The legitimate playbook is to use those channels and produce that content. The illegitimate playbook is now also a published list of techniques being actively detected.

The choice between them isn’t about ethics in the abstract. It’s about which approach earns citations that hold over multi-year horizons. Adversarial GEO is a borrowed sword. Working with the engines is the only one durable enough to keep.