Daily Benchmarks and Fast Iteration for AI Marketing (Part 3 of 6)


Running an AI marketing strategy without a daily benchmark is flying blind. Zeover runs your priority queries across ChatGPT, Claude, Gemini, and Grok on your chosen cadence so your team sees movement the day it happens.

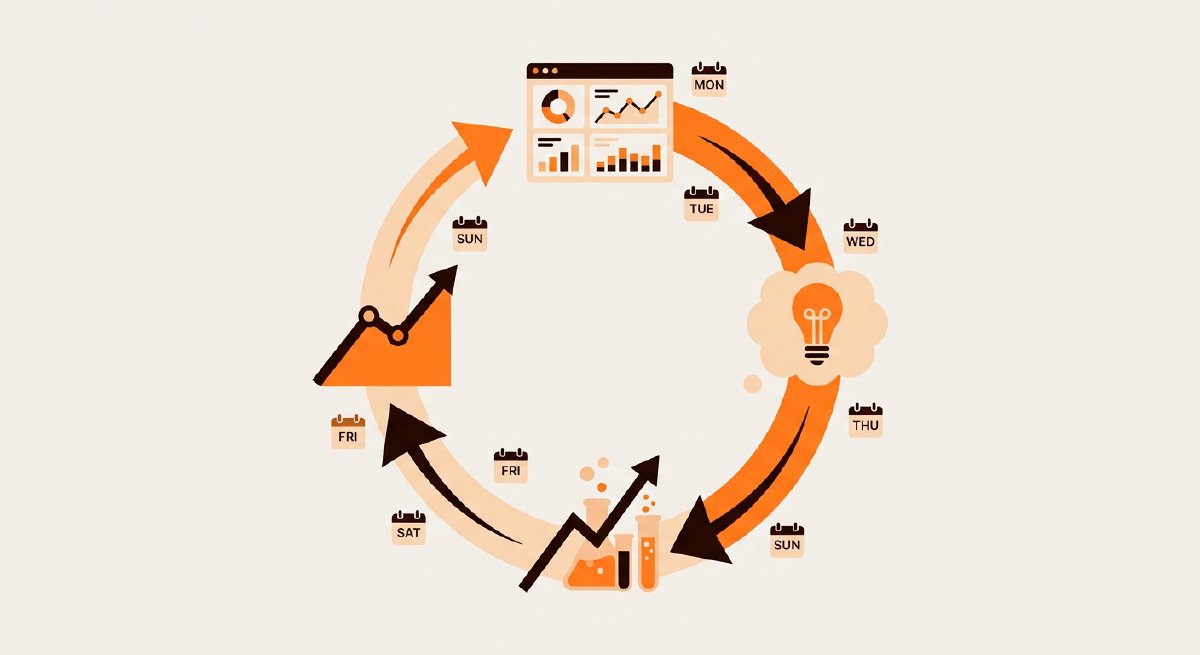

This is part three of the CMO playbook series. Parts one and two covered the mandate and the board-level framing. This part is about the operational rhythm underneath - the daily benchmarks, the weekly iteration, and the monthly reviews that turn AI marketing strategy from a quarterly initiative into a continuous practice.

Most marketing teams are built for campaign rhythm: plan quarterly, execute, measure at the end. AI marketing doesn’t reward that cadence. The underlying medium changes faster than a quarterly cycle can track, and the brands that are winning run short feedback loops with small, attributable changes every week.

TL;DR

- Daily: track visibility delta on your top 10 priority queries across the four major AI engines. One dashboard, one glance, no more than two minutes.

- Weekly: review the delta trends, form one hypothesis, ship one specific change. Single variable per week, measured in isolation.

- Monthly: full benchmark review including share of voice vs. competitors, content output vs. plan, and any AI model updates that may have shifted citation patterns.

- Quarterly: strategic review. Are your priority queries still the right ones? Are your competitors the ones you thought they were?

- Don’t iterate faster than measurement can resolve. Early signals show up in 2-4 weeks. Weekly hypothesis testing is the fastest meaningful cadence.

Why Speed Matters

AI engines update their underlying models, retrain on fresh data, and shift citation patterns continuously. ChatGPT reached over 900 million weekly active users in early 2026 per TechCrunch. Gemini passed 750 million monthly active users around the same time. The pace of model shipping at the major labs means what worked in Q1 may not hold by Q3.

A marketing team running quarterly benchmarks on AI visibility cannot see changes as they happen. Three months is long enough for a competitor to publish a press release, get widely indexed, and move from zero to top recommendation on a query you thought you owned. By the time your quarterly report surfaces it, the position is already lost.

The fix is matching the speed of iteration to the speed of the medium. Daily visibility tracking at the signal level. Weekly structured iteration on one variable. Monthly and quarterly for direction, not tactics.

The Daily Pulse

The daily check should take the CMO or marketing lead less than two minutes. The goal isn’t deep analysis - it’s noticing when something moves outside normal range so you can dig in.

What to put on the daily dashboard:

- Top 10 priority queries by business value (the ones the board cares about).

- Visibility status across each of ChatGPT, Claude, Gemini, and Grok. Mention rate per engine.

- Delta from yesterday, flagged in color when movement exceeds a threshold (say, 10 percentage points of mention rate).

- New competitor mentions highlighted when a brand that wasn’t in the response last week now is.

You’re not doing statistical analysis on a single day’s data. You’re building intuition and catching sudden drops. A 30-point drop in Grok citations for a priority query on a Tuesday is worth investigating Tuesday, not waiting until next month’s report.

The two-minute discipline is important. If the dashboard takes longer, marketing leads stop looking at it. Two minutes is the time between meetings.
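The flagging logic behind such a dashboard can be small. The sketch below is illustrative only: the `Snapshot` shape, the field names, and the reading of the threshold as percentage points of mention rate are assumptions, not a description of any particular tool. It compares today's results to a baseline (yesterday for deltas, or last week's snapshot for competitor checks) and returns only the rows worth a look.

```python
from dataclasses import dataclass

THRESHOLD_POINTS = 10.0  # assumed: flag moves of 10+ percentage points


@dataclass(frozen=True)
class Snapshot:
    """One result for one query on one engine (hypothetical shape)."""
    query: str
    engine: str
    mention_rate: float     # percent of sampled responses mentioning the brand
    competitors: frozenset  # competitor brands seen in those responses


def daily_flags(baseline, today, threshold=THRESHOLD_POINTS):
    """Return (query, engine, delta, new_competitors) for rows that moved."""
    previous = {(s.query, s.engine): s for s in baseline}
    flags = []
    for snap in today:
        prior = previous.get((snap.query, snap.engine))
        if prior is None:
            continue  # no baseline yet for this query/engine pair
        delta = snap.mention_rate - prior.mention_rate
        new_competitors = sorted(snap.competitors - prior.competitors)
        # Flag on either a large move or a competitor that was not there before.
        if abs(delta) >= threshold or new_competitors:
            flags.append((snap.query, snap.engine, delta, new_competitors))
    return flags
```

Anything the function does not return stays off the screen, which is what keeps the check inside two minutes.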

The Weekly Iteration Loop

Weekly is where the actual work happens. The loop:

1. Review the week. What moved? What didn’t? Which queries drifted? Any sudden appearances or disappearances? This is 20 minutes at the start of the week.

2. Pick one hypothesis. Based on what moved, pick one variable to change. “We think adding FAQ schema to our pricing page will move us into ChatGPT’s response for [priority query].” Hypothesis, not to-do list. One, not five.

3. Ship the change. Small enough to attribute. Don’t batch five changes into one release and then try to figure out which caused movement four weeks later. The whole point of single-variable changes is attribution.

4. Wait. Early signals appear in 2-4 weeks. Shipping another change on the same page before the first change has time to show effect will confound the measurement. Resist the urge.

5. Re-measure in 4-6 weeks. Did the targeted query shift? By how much? Did anything else shift in ways that might be related? Record the outcome in a running log - the team that keeps the best record of what worked and what didn’t has the biggest compounding advantage over time.

6. Generalize. Changes that worked on one query are worth applying to related queries. Changes that didn’t work aren’t worth repeating without a revised hypothesis.
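The running log and the "wait" rule from the loop above can be sketched as a small data structure. This is a minimal illustration under stated assumptions: the `Experiment` fields are hypothetical, and the four-week wait is simply the upper end of the 2-4 week early-signal window.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

WAIT_WEEKS = 4  # assumed: upper end of the 2-4 week early-signal window


@dataclass
class Experiment:
    """One row of the running log (field names are illustrative)."""
    page: str
    query: str
    hypothesis: str
    shipped: date
    outcome: Optional[str] = None  # filled in at the 4-6 week re-measure

    def settled_on(self) -> date:
        """Earliest date a second change to the same page avoids confounding."""
        return self.shipped + timedelta(weeks=WAIT_WEEKS)


def can_ship(log, page, today):
    """True if no earlier change on this page is still inside its wait window."""
    return all(e.page != page or today >= e.settled_on() for e in log)
```

Checking `can_ship` before each weekly release enforces the single-variable rule per page while leaving other pages free for the next hypothesis.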

What to Iterate On

The variables worth testing against AI visibility, ranked by typical impact:

Technical signals (biggest, fastest-moving). Unblocking AI crawlers (GPTBot, OAI-SearchBot, ChatGPT-User, Google-Extended, ClaudeBot, PerplexityBot), adding llms.txt, and implementing schema markup on priority pages. These are one-time fixes with compounding returns.
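Whether those crawlers are actually unblocked can be checked against a site's robots.txt with Python's standard library. The sketch below is a rough audit, not a full compliance check: it parses robots.txt text you supply (the `SAMPLE` content is hypothetical) and reports which of the user-agent tokens listed above are disallowed for a given URL. Real crawlers may interpret edge cases differently from `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens taken from the list above.
AI_AGENTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User",
             "Google-Extended", "ClaudeBot", "PerplexityBot"]


def blocked_agents(robots_txt, url, agents=AI_AGENTS):
    """Return the agents that the given robots.txt text disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks two of the six tokens site-wide.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    print(blocked_agents(SAMPLE, "https://example.com/pricing"))
```

Running the check on each priority page before and after a robots.txt change gives the running log a concrete before/after record.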

Content additions. Publishing a new substantive piece targeted at a priority query. Depth beats breadth; one strong piece moves the needle more reliably than five thin pieces.

Content rewrites. Rewriting an existing page for machine readability - declarative opening sentences, self-contained sections, clean heading hierarchy. The KDD 2024 GEO paper found techniques like adding quotations (+41%), statistics (+33%), and source citations (+28%) each produced measurable visibility lift.

Brand boilerplate consistency. Aligning LinkedIn, press boilerplate, directory listings, and site boilerplate. This is a quiet lift that shows up weeks later as AI engines detect that cross-channel signals now agree.

Earned media push. Pitching a specific trade publication or industry newsletter on a specific story. The piece has to be genuinely useful to get placed; the AI visibility lift is a byproduct of good press strategy.

Variables that don’t move the needle fast enough for weekly iteration:

- Broad brand campaigns (slow, hard to attribute)

- Social post volume (minimal direct AI citation impact, except on Grok, which draws on X posts)

- Paid media (doesn’t directly affect organic GEO)

The Monthly Review

Monthly is for zooming out. Full benchmark across all tracked queries, not just top 10. Share of voice vs. named competitors. Content output against plan. Any AI engine model updates that may have shifted citation patterns.

The monthly review needs one specific question answered: is our overall share of voice trending in the right direction? If yes, keep iterating on the current playbook. If no, a bigger reset may be needed than one variable change per week will produce.
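Share of voice can be defined in several ways, and this article does not fix one. The sketch below assumes the simplest version: each brand's share of all tracked-brand mentions across the sampled responses. The function name and input shape are illustrative.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Percent of tracked-brand mentions that belong to each brand.

    responses: iterable of sets, one per sampled AI response, each holding
    the brands mentioned in that response.
    """
    counts = Counter()
    for mentioned in responses:
        for brand in brands:
            if brand in mentioned:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}  # nobody was mentioned
    return {brand: 100.0 * counts[brand] / total for brand in brands}
```

Computing this the same way every month matters more than which definition you pick, since the review question is about the trend.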

The monthly review is also where you should check for AI engine model updates. When OpenAI ships a major GPT update, citation patterns can shift within weeks. When Google rolls out a new Gemini model, Google AI Overviews behavior can change as well. These are industry-level events that affect everyone and can mask the impact of your specific changes. Noting them in your running log keeps future-you from mis-attributing a month’s movement to your own work.

The Quarterly Reset

Quarterly is strategic, not tactical. Three questions:

Are our priority queries still the right ones? Markets shift. New competitors emerge. Product launches create new query opportunities. Audit the tracked query list quarterly and drop what’s no longer relevant, add what’s newly important.

Are our competitors still the ones we thought? AI-search competitors are often different from your traditional marketplace competitors. Quarterly, check which brands AI engines recommend alongside or instead of you, and update your competitive set.

Is our strategy working at the level we projected? Compare actual share-of-voice movement to the targets you committed to at the last board meeting. Be honest. If you promised to move share of voice from 15% to 30% and delivered a move from 15% to 22%, that’s a miss, and understanding why is more valuable than explaining it away.
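One way to keep that comparison honest is to express it as the fraction of promised movement actually delivered. The helper below is a hypothetical illustration of that arithmetic, not a standard metric.

```python
def target_attainment(start, target, actual):
    """Fraction of the promised movement that was actually delivered."""
    promised = target - start
    if promised == 0:
        raise ValueError("target equals the starting point; nothing was promised")
    return (actual - start) / promised


if __name__ == "__main__":
    # Promised 15% -> 30%, delivered 15% -> 22%: 7 of 15 promised points.
    print(f"{target_attainment(15, 30, 22):.0%}")
```

In the example above, 7 of the 15 promised points were delivered, which is a little under half of the commitment.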

When the Models Update

AI engines ship meaningful changes on their own schedule. An OpenAI GPT version bump, a Gemini model launch, a Claude update - each can shift what the engine cites overnight. Your measurement needs to tell the difference between your actions moving the needle and the model itself shifting underneath you.

Practical signals of a model update affecting your visibility:

- Broad shifts across many tracked queries at once (a specific content change wouldn’t produce that).

- Shifts on platforms you haven’t changed anything for.

- Industry news about the provider shipping something.

When you spot one, don’t iterate on your tactics until you’ve re-baselined. Give the new model a week of observation before interpreting the numbers. Otherwise you’ll chase a signal that isn’t about your work at all.
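The first two signals can be approximated with a simple breadth check. The sketch below is a heuristic under stated assumptions: the 10-point threshold, the 50% breadth cutoff, and the input shape are all illustrative. It flags an engine when a large share of the queries you did not target moved at once.

```python
def likely_model_update(deltas, targeted_queries, threshold=10.0, breadth=0.5):
    """Return engines whose movement looks provider-driven rather than ours.

    deltas: {engine: {query: change in mention rate, in percentage points}}
    targeted_queries: queries our own shipped changes were aimed at
    """
    suspects = []
    for engine, by_query in deltas.items():
        # Only look at queries we did not touch; movement there is not ours.
        untouched = [d for q, d in by_query.items() if q not in targeted_queries]
        if not untouched:
            continue
        moved = sum(1 for d in untouched if abs(d) >= threshold)
        if moved / len(untouched) >= breadth:
            suspects.append(engine)
    return sorted(suspects)
```

A flagged engine is a prompt to check provider news and re-baseline, not proof that a model update happened.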

How Zeover Runs This Cadence

Zeover is designed for the daily/weekly/monthly/quarterly rhythm. Define your priority queries once, and the platform:

- Runs the benchmark across ChatGPT, Claude, Gemini, and Grok daily (or whatever cadence you pick).

- Surfaces visibility deltas, share of voice shifts, and competitor movements automatically.

- Flags likely model-update events so you can distinguish signal from noise.

- Links detected gaps to specific remediation recommendations.

For CMOs, the point is that the daily/weekly discipline isn’t something your marketing team has to build from scratch. The measurement and alerting layer runs in the background. Your team focuses on forming hypotheses and executing changes - the parts that actually produce movement.